Star Light, Entropy Right

15 January 2024

One of the central factors in the evolution of galaxies is the rate at which stars form. Some galaxies are in a period of active star formation, while others form very few new stars. Very broadly, it’s thought that young galaxies go through a period of rapid star formation before leveling off as they mature. But a new study finds some interesting things about just when and why stars form.[1]

The study looked at Brightest Cluster Galaxies (BCGs), which are the largest and brightest galaxies found within galaxy clusters. In this case, the team studied the BCGs of 95 clusters identified with the South Pole Telescope (SPT). These galaxies are at redshifts ranging from z = 0.3 to z = 1.7, which spans the period of the Universe from 3.5 to 10 billion years ago.
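To see where those ages come from, here is a minimal sketch that converts redshift to lookback time. It assumes a flat ΛCDM cosmology with H0 = 70 km/s/Mpc and Ωm = 0.3, which are illustrative round values and not necessarily the ones used in the study. The function name `lookback_time_gyr` is likewise just for this example.

```python
import math


def lookback_time_gyr(z, h0=70.0, omega_m=0.3, steps=10_000):
    """Lookback time in Gyr for a flat Lambda-CDM universe.

    h0 (km/s/Mpc) and omega_m are assumed, illustrative values.
    """
    omega_lambda = 1.0 - omega_m      # flatness: the two densities sum to 1
    hubble_time_gyr = 977.8 / h0      # 1/H0 in Gyr when H0 is in km/s/Mpc

    # Midpoint-rule integration of dz' / ((1 + z') * E(z')) from 0 to z,
    # where E(z') = sqrt(omega_m * (1 + z')^3 + omega_lambda).
    dz = z / steps
    total = 0.0
    for i in range(steps):
        zi = (i + 0.5) * dz
        e_z = math.sqrt(omega_m * (1.0 + zi) ** 3 + omega_lambda)
        total += dz / ((1.0 + zi) * e_z)
    return hubble_time_gyr * total


for z in (0.3, 1.7):
    print(f"z = {z}: about {lookback_time_gyr(z):.1f} billion years ago")
```

With these assumed parameters, the two ends of the sample come out at roughly 3.4 and 9.7 billion years, in line with the range quoted above.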

That’s a good chunk of cosmic time, so you would think the data would show how star formation changed over time: a high rate when galaxies were young and there was plenty of gas and dust around, then a low rate after much of that raw material had been consumed. But what the team found was that within these clusters, star formation was remarkably consistent across billions of years. They also found the key to when star formation occurs: entropy.

Entropy is a subtle and often misunderstood concept in physics. It is often described as the level of disorder in a system, where the entropy of a broken cup is higher than that of an unbroken cup. Since the entropy of an isolated system never decreases, you never see a cup spontaneously unbreak itself. But in reality, entropy can describe a range of things, from the flow of heat to the information required to describe a system.

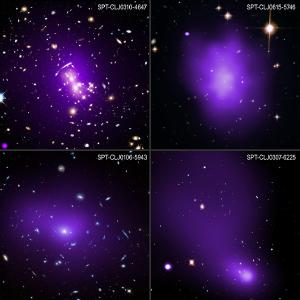

X-ray: NASA/CXC/MIT/M. Calzadilla et al.; Optical: NASA/ESA/STScI; Image Processing: NASA/CXC/SAO/N. Wolk & J. Major

One of the things to keep in mind is that the entropy within a region of space can decrease, as long as it increases elsewhere. The most common example is your refrigerator. The interior of your fridge can be much cooler than the rest of your kitchen because electrical power pumps heat away from it. The same is true for life on Earth. Living things have a relatively low entropy, which is possible thanks to the energy we get from the Sun. A similar effect can occur within galaxy clusters. As gas and dust collapse under gravity, the entropy within can decrease. The material becomes denser and cooler over time, and thus stars can begin to form.

At first glance, this seems obvious. Of course stars can form when there is plenty of cool gas and dust around. That’s how it works. But what the team found is that there isn’t a specific temperature or density at which stars form. These factors play off each other in various ways, but the key is the overall entropy. Once the entropy within the cluster drops below a critical level, stars begin to form. They found that this critical level can be reached across billions of years, which is why star formation in all these clusters is so remarkably stable.
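To see how temperature and density can trade off, consider the definition of entropy commonly used for the hot gas in clusters, K = kT · n_e^(-2/3), where kT is the gas temperature in keV and n_e is the electron density per cubic centimeter. The post itself doesn’t spell out a formula, so treat this as a sketch under that conventional definition. The sample values and the 30 keV cm² threshold are illustrative assumptions (values of a few tens of keV cm² are often quoted in the cluster literature), not numbers taken from the study.

```python
def cluster_entropy_kev_cm2(kt_kev, n_e_cm3):
    """Conventional cluster 'entropy' K = kT * n_e^(-2/3), in keV cm^2."""
    return kt_kev * n_e_cm3 ** (-2.0 / 3.0)


def can_form_stars(entropy_kev_cm2, threshold_kev_cm2=30.0):
    """True if the entropy is below an ASSUMED, illustrative threshold."""
    return entropy_kev_cm2 < threshold_kev_cm2


# Hypothetical gas conditions as (kT in keV, n_e in cm^-3).
samples = {
    "cool and dense": (3.0, 0.1),
    "hotter but denser": (6.0, 0.1 * 2 ** 1.5),  # same entropy as the first
    "hot and diffuse": (6.0, 0.01),
}

for label, (kt, n_e) in samples.items():
    k = cluster_entropy_kev_cm2(kt, n_e)
    print(f"{label}: K = {k:.1f} keV cm^2, below threshold: {can_form_stars(k)}")
```

The first two samples have different temperatures and densities but the same entropy, so both fall below the assumed threshold, while the hot, diffuse gas stays well above it. That is the sense in which no single temperature or density marks the onset of star formation.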

It’s an important result because it shows that rather than measuring just how much gas and dust there is within a galaxy, or whether it’s at a sufficiently cool temperature, we only need to quantify its entropy. And when that entropy is just right, new stars will shine.

[1] Calzadilla, Michael S., et al. “The SPT-Chandra BCG Spectroscopic Survey I: Evolution of the Entropy Threshold for Cooling and Feedback in Galaxy Clusters Over the Last 10 Gyr.” arXiv preprint arXiv:2311.00396 (2023).